Engaging with Open Source Technologies

These are the presentation notes for the “Engaging with Open Source Technologies” presentation during the “Open Source Publishing Technologies: Current Status and Emerging Possibilities” webinar on Wednesday, August 14, 2019.

Webinar Description

This session will focus on discussions of open source publishing platforms and systems. What is the value proposition? What functionalities are commonplace? Where are the pitfalls in adoption and use by publishers or by libraries? What potential is there for scholarly societies who are similarly responsible for publication support and dissemination? Given the rising interest in open access and open educational resources, this session will offer professionals a sense of what is available, a sense of practical concerns and a general sense of their future direction.

Talk Abstract

An open source project that focuses only on the code is missing out on some of the biggest opportunities that the open source philosophy offers. To be sure, developing software with an open source philosophy brings a diversity of knowledge and shares the development burden over a wide group. But a community that embraces that philosophy in the conception, design, specification, and development of a project can build exceptionally useful software and a fulfilling experience for all involved. This portion of the program explores some of the structures and processes found in successful open source communities, using examples from projects inside and outside of the field.

Slides

PDF of slides

Resources

Arp, Laurie Gemmill, and Megan Forbes. “It Takes a Village: Open Source Software Sustainability.” LYRASIS, February 2018. https://doi.org/10.7916/D89G70BS

Fitzgerald, Brian. “The Transformation of Open Source Software.” MIS Quarterly 30, no. 3 (2006): 587. https://doi.org/10.2307/25148740

Maxwell, John W., et al. “Mind the Gap: A Landscape Analysis of Open Source Publishing Tools and Platforms.” July 2019. https://mindthegap.pubpub.org/

Photo/Illustration Acknowledgments

Slide 1: “Codex Claustroneoburgensis 980” from College of Saint Benedict & Saint John’s University via DPLA

Slide 10: “Agile Project Management by Planbox” via Wikimedia Commons

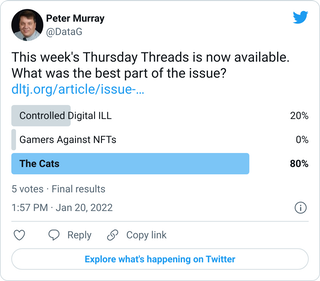

Slide 15: “kiyomi gets chin scratches in PHX airport pet relief area” by Taro the Shiba Inu via Flickr

Slide 16: “Sunset” from the National Archives and Records Administration via DPLA

Key Quotations from Resources

In 2006, Brian Fitzgerald wrote of a significant shift in how open-source software projects were being considered and operated. Fitzgerald noted that the rise of successful open-source software (which he called “OSS 1.0”) was characterized by self-organized, Internet-based projects that gathered loose communities around sheer willingness to participate. Fitzgerald identified a newer mode, which he called “OSS 2.0,” characterized by “purposeful design” and institution-sponsored “vertical domains,” and much more likely to include paid deve…